The Rise of AI Agents in Customer Service: What’s Changing in 2026

Agentic AI is now a full market category, projected to grow from about $7.3B in 2025 to over $139B by 2034.

If you zoom out on support in 2026, one pattern stands out: customers don’t remember the chat; they remember the outcome.

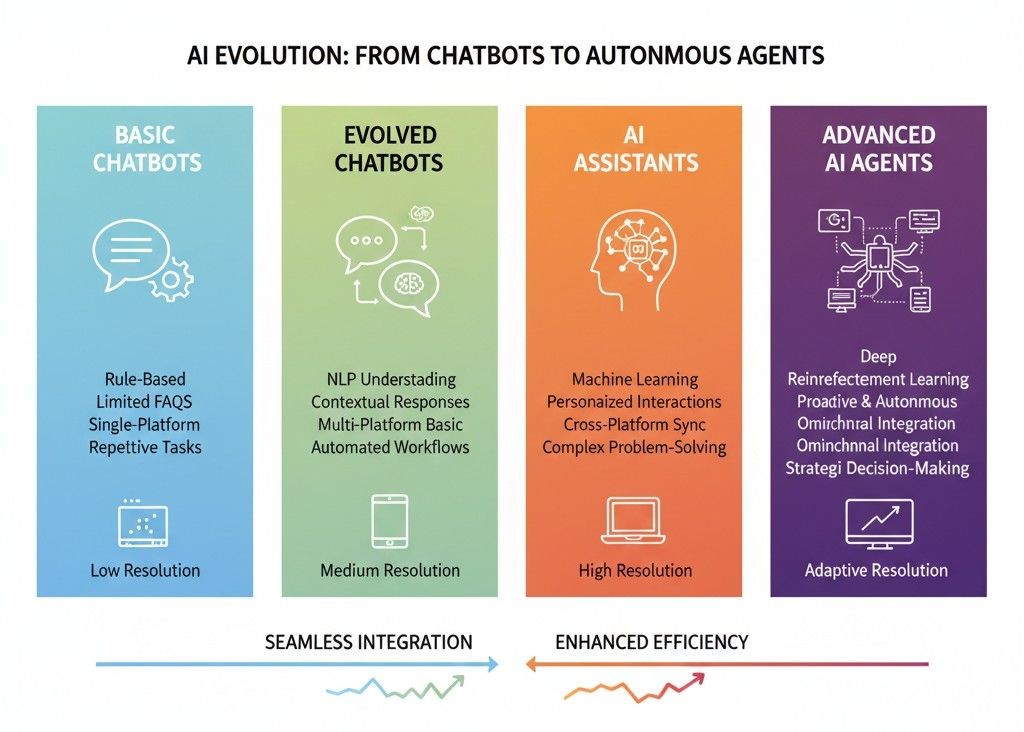

For a decade, we threw chatbots at every support problem. They lowered response times, but didn’t always lower frustration. Customers still had to repeat context. Agents still juggled five tools. Leadership still stared at backlog graphs.

That’s the gap driving the rise of AI agents in customer service. The shift is simple but brutal: AI is moving from answering questions to owning workflows.

This is exactly where a customer support AI agent built by a company like What AI Services sits: not a shiny toy, not a replacement call center, but a system that answers, routes, updates, and resolves while your humans handle the edge cases.

Why AI agents are suddenly everywhere in support

The economics caught up with the vision

Until recently, “AI agents” were mostly conference talks. Latency was high, integrations were brittle, and every small workflow needed custom engineering.

That changed because:

- LLM tooling matured: frameworks now coordinate multi-step actions, not just one-off responses.
- Tool-first architectures let models call APIs reliably (CRM, billing, ticketing, logistics).
- Unit economics improved: you’re not paying for “chat”, you’re paying for resolved issues at scale.

This is the context behind the AI trend in customer service right now: moving from “can it reply?” to “can it resolve without breaking anything?”.

Customer expectations got sharper

Customers now expect AI to do three things well:

- Understand the real problem, not just the words
- Pull the right context from systems
- Take the right action within policy

How are AI agents used in customer service, day to day?

Think of them as ultra-reliable junior specialists that sit between your customers and your systems. They don’t just chat. They do work.

Investigation across tools

For a typical ticket (“Why was I charged twice?”), an agent can:

- Pull past invoices and payment logs
- Compare subscription status and usage
- Check known incident logs
- Confirm whether this is a bug, user error, or legitimate charge

No human has been involved yet, but the issue is basically debugged.
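As a rough illustration of the investigation step, the Python sketch below groups payments by invoice and flags an invoice that was paid twice for the same amount. The `Payment` record, the `classify_double_charge` function and the three labels are hypothetical names invented for this example, not part of any real billing API; a production agent would pull this data from your payment provider and incident tracker.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Payment:
    payment_id: str
    invoice_id: str
    amount_cents: int


def classify_double_charge(payments: list[Payment], open_incident: bool) -> str:
    """Return a rough diagnosis for a "charged twice" ticket."""
    # Group captured payments by the invoice they were meant to settle.
    by_invoice: dict[str, list[Payment]] = defaultdict(list)
    for p in payments:
        by_invoice[p.invoice_id].append(p)

    # An invoice paid more than once with the same amount looks like a duplicate.
    duplicated = [
        inv for inv, ps in by_invoice.items()
        if len(ps) > 1 and len({p.amount_cents for p in ps}) == 1
    ]
    if duplicated and open_incident:
        return "bug"           # duplicate lines up with a known billing incident
    if duplicated:
        return "needs_review"  # duplicate found, cause still unknown
    return "legitimate"        # every charge maps to its own invoice


payments = [
    Payment("p1", "inv-100", 2900),
    Payment("p2", "inv-100", 2900),
    Payment("p3", "inv-101", 2900),
]
print(classify_double_charge(payments, open_incident=True))
```

The diagnosis is deliberately coarse: its job is to decide which policy path the ticket takes next, not to replace a billing audit.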

Policy-bound actions

Once the cause is identified, the agent can:

- Apply a refund or credit under a defined threshold
- Update ticket status and tags in the helpdesk
- Add internal notes for future reference
- Trigger proactive emails or SMS updates

Proactive interventions

With proper signals, agents can:

- Reach out when a shipment is delayed
- Flag likely billing issues before renewal
- Nudge users when they keep hitting the same error

What AI Services’ AI Customer Support Assistant is positioned exactly here: always-on, connected to tickets, chats and FAQs, acting as a layer that both responds and quietly cleans up operational mess in the background.

Voice is back: AI customer service agent voice

Chat got most of the early hype, but voice is where the pain (and value) is still huge: people call when they’re anxious, angry, or stuck.

A modern AI customer service agent voice setup can:

- Answer instantly, 24/7, without queue music
- Handle multiple calls at once
- Capture structured intent (“refund”, “cancellation”, “reschedule”, “fraud concern”)
- Trigger downstream actions or escalate with a proper summary

This is where What AI Services is very on-brand: its core positioning is an AI voice assistant that never misses a call, syncs with calendars, CRMs and support tools, and feels close to a human receptionist in tone.

If you’re evaluating the best AI agent for customer service, it’s not just “who talks nicest”. It’s:

- How deeply does it integrate with our stack?
- Can one agent handle both voice and text?
- How visible and controllable are its actions?

Designing an AI agentic system for customer support (without overcomplicating it)

A big search term right now is designing an AI agentic system for customer support, and honestly, most of the content out there either over-simplifies or over-engineers it.

Here’s the practical model teams are actually using.

Start with one clear outcome

Pick a single workflow with:

- Clear start and end (e.g., “refund under $50”, “reschedule appointment”, “where is my order?”)
- Stable policies
- Reversible actions

Let the customer support AI agent own that fully before you expand.

Separate “thinking” from “doing”

A robust agentic design looks like this:

- LLM layer: interprets customer intent, retrieves knowledge, plans steps
- Tool layer: calls your CRM, billing system, ticketing tool, logistics, etc.
- Policy layer: enforces what’s allowed (amount caps, regions, customer segments)

This is exactly how leading guides describe agentic support: LLMs orchestrating tool-driven workflows, not improvising actions from scratch.
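To make the separation concrete, here is a minimal Python sketch of the three layers. Everything in it is an assumption for illustration: `plan` is a keyword stub standing in for a real model call, `TOOLS` holds stubbed functions rather than real CRM or billing integrations, and the $50 refund cap is borrowed from the "refund under $50" example in this article.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class PlannedAction:
    tool: str          # which tool the plan wants to call
    amount_usd: float  # money involved, 0.0 if none


# Tool layer: the only place that touches external systems (stubbed here).
TOOLS: dict[str, Callable[[PlannedAction], str]] = {
    "refund": lambda a: f"refunded ${a.amount_usd:.2f}",
    "lookup_status": lambda a: "status: in transit",
}


def plan(message: str) -> PlannedAction:
    """LLM layer stand-in: a real system would call a model here."""
    if "charged twice" in message.lower():
        return PlannedAction(tool="refund", amount_usd=29.0)
    return PlannedAction(tool="lookup_status", amount_usd=0.0)


def allowed(action: PlannedAction, refund_cap_usd: float = 50.0) -> bool:
    """Policy layer: ordinary code, evaluated outside the model."""
    if action.tool not in TOOLS:
        return False  # unknown tools are never executed
    if action.tool == "refund":
        return action.amount_usd <= refund_cap_usd
    return True


def handle(message: str) -> str:
    action = plan(message)       # thinking
    if not allowed(action):      # checking
        return "escalated to human"
    return TOOLS[action.tool](action)  # doing


print(handle("Why was I charged twice?"))
```

The design choice worth noting is that the policy check is plain code sitting between the plan and the tool call, so a badly worded prompt or a confused model cannot widen what the agent is permitted to do.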

Limit autonomy the smart way

A lot of people worry “How will AI take over customer service?”. The scary version is unchecked autonomy. The real-world version is scoped autonomy:

- Green zone: Actions the agent can do fully alone (tagging, FAQ, status checks)
- Yellow zone: Reversible actions with logs (small credits, reschedules)
- Red zone: Must escalate (identity changes, legal statements, high-value refunds)

KPMG’s CX report literally frames it this way: agentic systems as enablers, not full replacements; the brands that win use AI to amplify human empathy instead of trying to delete it.
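One way to encode the green, yellow and red zones is a simple lookup that defaults to the most restrictive tier. The sketch below is illustrative only: the action names are hypothetical labels derived from the examples in the zone list, and `audit_log` is an in-memory stand-in for whatever logging your helpdesk provides.

```python
from enum import Enum


class Zone(Enum):
    GREEN = "act alone"
    YELLOW = "act, log, allow undo"
    RED = "escalate to a human"


# Hypothetical action names, mapped using the examples from the zone list.
ZONE_BY_ACTION = {
    "tag_ticket": Zone.GREEN,
    "answer_faq": Zone.GREEN,
    "check_status": Zone.GREEN,
    "small_credit": Zone.YELLOW,
    "reschedule": Zone.YELLOW,
    "change_identity": Zone.RED,
    "legal_statement": Zone.RED,
    "high_value_refund": Zone.RED,
}

audit_log: list[str] = []


def zone_for(action: str) -> Zone:
    # Unknown actions default to RED so new capabilities start locked down.
    return ZONE_BY_ACTION.get(action, Zone.RED)


def dispatch(action: str) -> str:
    zone = zone_for(action)
    if zone is Zone.RED:
        return "escalated"
    if zone is Zone.YELLOW:
        audit_log.append(action)  # keep a trail so the action can be reversed
    return "executed"
```

Defaulting unknown actions to red means a newly added tool does nothing on its own until someone deliberately assigns it a zone.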

What type of AI agents are used in self-driving cars and why?

You’ll sometimes see people ask this question and wonder what it has to do with support.

Self-driving systems usually break down into:

- Perception agents (see the world: lanes, cars, pedestrians)
- Planning agents (decide what to do next)
- Control agents (turn, brake, accelerate)
- Safety agents (override if something looks wrong)

In support, agentic AI in customer service is quietly adopting a similar pattern:

- Understanding: detect intent, emotion, urgency
- Planning: decide which workflow applies
- Execution: call tools, apply changes, update records
- Safety: refuse risky actions, escalate with full context

The point isn’t “support is like driving”. The point is: every serious agentic system is layered, observable and interruptible. If your vendor or in-house team can’t show you those layers, you don’t have a production-ready agent, you have a fancy demo.

How can AI improve customer services in a way that’s measurable?

A lot of blogs answer “How can AI improve customer services?” with vague phrases like “delight” and “enhance CX”. That doesn’t help you justify a budget.

Let’s talk in numbers and operations.

From recent customer-service-focused research and vendor case studies:

- Early adopters of agentic support report 50% cuts in time-to-resolution and automation rates as high as 80–90% for specific workflows.
- Intercom’s 2026 report shows AI is no longer “nice to have”; 58% of teams say improving customer experience (not just cost savings) is their top AI priority.

That’s the level of improvement a What AI Services-style agent is aiming at: slashing busy-work, tightening resolution loops, and giving your human team fewer but higher-quality conversations to handle.
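If you want to track figures like these against your own helpdesk export, a minimal Python sketch could look like the following. The `Ticket` fields and the `support_metrics` function are assumptions made for this example; map them to whatever your ticketing tool actually records. The sample numbers are made up and are not benchmark data.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class Ticket:
    minutes_to_resolve: float
    resolved_by_agent: bool  # True if no human touched the ticket
    reopened: bool           # True if the customer had to follow up


def support_metrics(tickets: list[Ticket]) -> dict[str, float]:
    if not tickets:
        raise ValueError("need at least one ticket")
    total = len(tickets)
    automated = sum(t.resolved_by_agent for t in tickets)
    clean = sum(not t.reopened for t in tickets)
    return {
        "median_minutes_to_resolve": median(t.minutes_to_resolve for t in tickets),
        "automation_rate": automated / total,
        "no_follow_up_rate": clean / total,
    }


# Made-up sample data, for illustration only.
sample = [
    Ticket(4, True, False),
    Ticket(10, True, False),
    Ticket(90, False, True),
    Ticket(6, True, False),
]
metrics = support_metrics(sample)
print(metrics)
```

Running the same calculation on a period before and a period after rollout gives you a comparison you can put in front of finance, which vague CX language cannot do.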

What is the AI trend in customer service over the next 2–3 years?

- From deflection to resolution: Success moves from “tickets avoided” to “issues resolved with no follow-up”.
- From bots to agents: You’ll still see chatbots, but the budget and engineering talent are shifting to agentic stacks.
- From tool sprawl to unified orchestration: AI doesn’t sit in front of systems; it sits between them, orchestrating workflows end-to-end.
- From one AI project to an AI fabric: Support, sales, and operations will share agentic infrastructure rather than building isolated projects.

For a platform like What AI Services, that looks like:

- AI voice as the front door
- Customer support agents handling tickets and FAQs
- Manager / executive assistants coordinating internal work

…all running on a consistent set of patterns and guardrails rather than five disconnected experiments.

So, will AI “take over” customer service?

Based on current data and how enterprises are rolling this out, the honest answer is:

- AI will take over repetitive operational work: status checks, structured refunds, reschedules, password workflows, simple configuration issues.
- AI will co-pilot complex work: drafting responses, gathering context, suggesting next best actions, summarizing conversations.
- Humans will own the hard stuff: escalations, exceptions, emotionally charged cases, strategic accounts, edge cases where judgment, creativity and context matter.

If you’re building your strategy for the next 3–5 years, the smarter question than “Will AI replace agents?” is:

What kind of work do we want humans to spend their time on once the agents are handling the boring parts?

That’s where the real competitive edge will come from.

If you frame your roadmap around agentic systems that resolve, not just bots that respond, you’re already ahead of most teams still stuck in “let’s add a chatbot” mode.

And if you’re running this on top of a platform like What AI Services, you’re not just following the rise of AI agents in customer service, you’re using them to rebuild how your support function works behind the scenes.