AI Voice Agents in Healthcare: What’s Working in 2026 (and What Will Get You in Trouble)

(Read this before you buy anything)

If you run a clinic, hospital department, telehealth line, billing team, or patient access center, you already know the ground reality: the phone is still the busiest “system” you have and it’s the least optimized. Patients call for scheduling, pre-auth questions, prescription refills, results follow-ups, directions, cancellations, and “please just explain this bill.” Staff gets buried, hold times creep up, no-shows rise, and suddenly your patient experience is being defined by voicemail.

That’s why interest in AI voice agents in healthcare has exploded in recent years: it’s one of the few AI use cases that can move the needle fast because it touches volume, cost, and patient satisfaction at the same time. What few vendors will tell you is that healthcare voice AI isn’t “set it and forget it.” The moment you capture health details, recordings and transcripts can become PHI, and your tooling decisions start living under HIPAA rules, vendor contracts, retention policies, and audit trails.

Here, we’ll cover what’s really happening in 2026: the market direction, the best-performing use cases, how personalization works without creeping patients out, and the compliance expectations that separate “cool demo” from “deployable system.” We’ll also show how What AI Services approaches healthcare voice deployments (patient calls, scheduling, and admin workflows) using tight scopes and practical guardrails.

Why Healthcare Voice AI Is Growing So Fast

Healthcare is a perfect storm for voice automation: high inbound volume, high repetition, constant staffing pressure, and huge penalties for errors.

1) The market is no longer a niche

Multiple analysts are now tracking this category as its own segment. One estimate puts the market for AI voice agents in healthcare at about $468M in 2024 and projects rapid growth through 2030.

Even outside pure healthcare, call-center AI growth is also accelerating, which indirectly boosts voice-agent adoption through better infrastructure, cheaper tooling, and better integrations.

2) The ROI is easier to prove than “generic AI”

Voice AI wins when it reduces:

- Missed calls and abandoned calls
- Appointment no-shows
- Admin time per patient interaction
- After-hours overflow burden

And unlike many AI projects, you can measure these outcomes in weeks.

3) Big tech normalized “voice AI in clinical workflows”

Tools like Microsoft’s Dragon Copilot pushed mainstream visibility for voice-driven clinician workflows (documentation, summarization, and related automation).

This creates “exec permission” for voice AI budgets even if your use case is patient access, not clinical dictation.

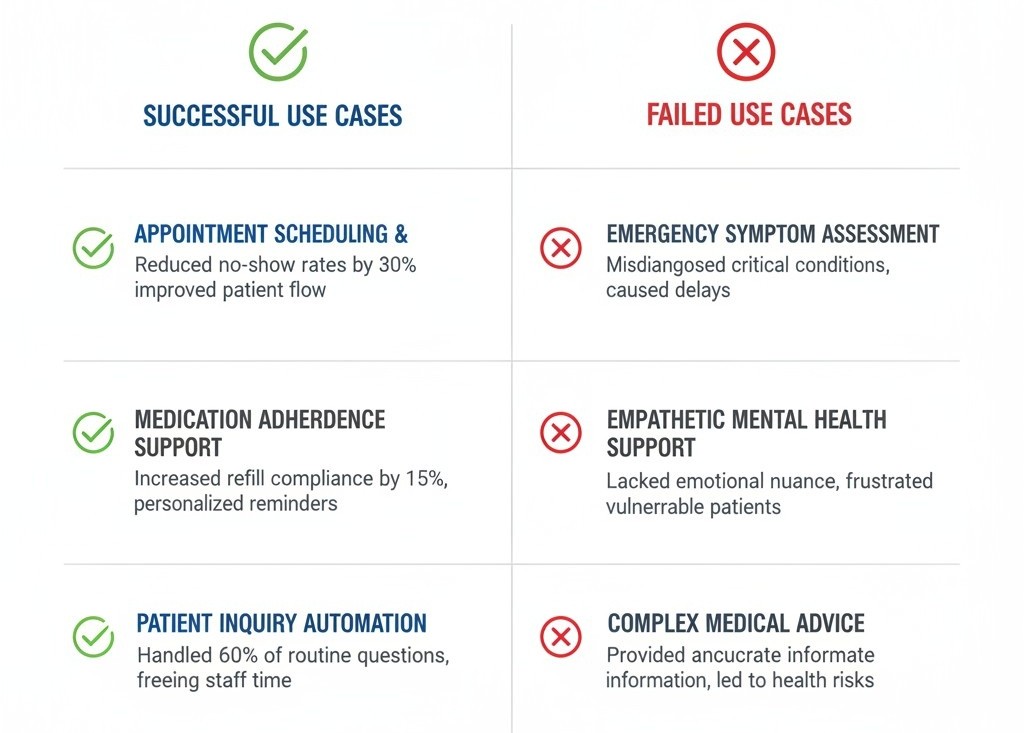

The 6 Winning Use Cases (& the 3 That Usually Fail)

Most “voice calling” projects fail because teams start with the most complex clinical scenarios first. Don’t do that.

These 6 use cases perform best:

- Scheduling + rescheduling + cancellations

  This is the cleanest starting point: high volume, structured outcomes, easy integrations.

- Appointment confirmations + reminders

  This is where teams chase measurable “no-show” improvement. Your message timing, retries, and escalation rules matter more than your AI’s personality.

- Insurance & billing routing (not full billing advice)

  Voice AI can:

  - Collect basic information
  - Route to correct department
  - Offer payment link workflows (if compliant)

  It should not freestyle billing explanations without guardrails.

- After-hours triage (tight scope)

  Not “diagnose.” Think: gather symptoms, identify red flags, route appropriately, log everything.

  If you cross into decision support, you must treat this as higher risk and align to relevant guidance for clinical decision support software.

- Prescription refill requests (workflow collection)

  Collect: patient identity, medication name, pharmacy, urgency, and route to your refill protocol.

- Results follow-up and admin FAQs

  If your EHR portal already has the information, your agent can help patients reach it, or route to a human.
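For the confirmations-and-reminders use case above, the timing and escalation rules can be written down as plain data before any voice work starts. The following is a minimal sketch, not a recommended schedule: the offsets (72h, 24h, 2h), the 20-hour escalation cutoff, and the names `REMINDER_OFFSETS`, `ReminderPlan`, and `build_reminder_plan` are all hypothetical and should be tuned against your own no-show data.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical offsets for illustration only; tune them against your own data.
REMINDER_OFFSETS = [timedelta(hours=72), timedelta(hours=24), timedelta(hours=2)]


@dataclass
class ReminderPlan:
    send_times: list      # datetimes when the agent should attempt a reminder
    escalate_at: datetime  # when a still-unconfirmed appointment goes to staff


def build_reminder_plan(
    appointment: datetime,
    now: datetime,
    escalate_before: timedelta = timedelta(hours=20),  # assumed cutoff
) -> ReminderPlan:
    """Keep only reminders that are still in the future and set an escalation time."""
    send_times = [
        appointment - offset
        for offset in REMINDER_OFFSETS
        if appointment - offset > now
    ]
    # Never schedule the escalation in the past.
    escalate_at = max(now, appointment - escalate_before)
    return ReminderPlan(send_times=send_times, escalate_at=escalate_at)
```

Keeping the schedule in one place like this makes it easy to change timing without touching the conversation design.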

These 3 use cases usually fail (early)

- “Full clinical diagnosis by phone”
- “Open-ended medical advice”
- “Anything that changes treatment plans without a clinician’s review”

If you want to go there later, fine, but start with admin and access workflows.

What “Best” Looks Like in 2026: Our Evaluation Checklist

People search for “tools.” What they need is a selection framework.

Below is a quick scoring table you can use in procurement and pilots.

| What to Evaluate | What “Good” Looks Like | Why It Matters |
| --- | --- | --- |
| Accuracy under noise | Works with background noise, accents, rushed speech | Healthcare calls are messy |
| Tool + EHR integration | Clear integration approach with scheduling/EHR/CRM workflows | Voice AI alone isn’t useful |
| PHI controls | Minimal collection, redaction options, retention controls | Reduces compliance exposure |
| Vendor contracting | Will sign a BAA when applicable | Non-negotiable in many setups |
| Audit trail | Logs for who/what/when + escalation outcomes | You’ll need this for trust |
| Human handoff | Seamless transfer + transcript/context pass | Avoids “start over” rage |
| Fail-safes | Clear “I’m not a clinician” boundaries + emergency routing | Safety + liability |
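If you want to turn the checklist into a number during a pilot, one option is a weighted score with a couple of pass/fail gates. This is a rough sketch under stated assumptions: the weights, the 0 to 5 rating scale, the choice of gated criteria, and the names `WEIGHTS`, `MUST_PASS`, and `score_vendor` are illustrative and do not come from any standard.

```python
from typing import Optional

# Hypothetical weights (they sum to 1.0); adjust to your own priorities.
WEIGHTS = {
    "accuracy_under_noise": 0.20,
    "ehr_integration": 0.20,
    "phi_controls": 0.15,
    "vendor_contracting": 0.15,
    "audit_trail": 0.10,
    "human_handoff": 0.10,
    "fail_safes": 0.10,
}

# Assumption: these criteria act as gates, so a low rating disqualifies the vendor.
MUST_PASS = {"vendor_contracting", "fail_safes"}


def score_vendor(ratings: dict, min_gate: int = 3) -> Optional[float]:
    """Return a weighted 0-5 score, or None if a gated criterion is below min_gate."""
    missing = set(WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings: {sorted(missing)}")
    if any(ratings[name] < min_gate for name in MUST_PASS):
        return None
    total = sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)
    return round(total, 2)
```

A vendor rated 4 on every row scores 4.0, while a vendor that will not sign a BAA (rated below the gate) is dropped no matter how good the demo sounded.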

This is the point where most teams ask for a “best AI voice agents in healthcare” list, but a list is useless if the product can’t integrate with your appointment flow and documentation habits.

Personalization Without the “Creepy” Factor

Everyone wants personalization. In healthcare, sloppy personalization becomes a trust problem.

What personalization should do

Personalization for AI voice agents in healthcare should focus on:

- Remembering preferred appointment windows (morning/evening)
- Language and pronunciation preferences
- Context that improves routing (“last time you called about billing”)
- Communication preferences (SMS follow-up vs email)

What it should NOT do

- “I noticed you have diabetes…” unless you have explicit consent and a validated clinical workflow
- Surfacing sensitive history without patient prompting
- Assuming identity from voice alone (bad idea in fraud scenarios)

Also: voice fraud is rising in contact centers, and healthcare is not immune. Don’t treat “sounds like the patient” as authentication. Build step-up verification rules.
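Step-up verification can be expressed as a small rule table: the more sensitive the action, the more verified factors the caller needs before the agent proceeds. The sketch below is illustrative only. The action tiers, factor counts, attempt limit, and the names `Action`, `CallerState`, and `next_step` are assumptions, not a compliance standard.

```python
from dataclasses import dataclass, field
from enum import Enum


class Action(Enum):
    GENERAL_INFO = "general_info"      # hours, directions
    SCHEDULING = "scheduling"          # touches an appointment record
    PHI_DISCLOSURE = "phi_disclosure"  # results, billing details


# Hypothetical policy: verified factors required before each kind of action.
REQUIRED_FACTORS = {
    Action.GENERAL_INFO: 0,
    Action.SCHEDULING: 2,
    Action.PHI_DISCLOSURE: 3,
}
MAX_FAILED_ATTEMPTS = 2  # assumption: after this many failures, hand off


@dataclass
class CallerState:
    verified_factors: set = field(default_factory=set)  # e.g. {"dob", "phone_on_file"}
    failed_attempts: int = 0
    voice_sounds_familiar: bool = False  # recorded, but never counted as a factor


def next_step(action: Action, caller: CallerState) -> str:
    """Return "proceed", "step_up", or "handoff" for the requested action."""
    if len(caller.verified_factors) >= REQUIRED_FACTORS[action]:
        return "proceed"
    if caller.failed_attempts >= MAX_FAILED_ATTEMPTS:
        return "handoff"
    return "step_up"
```

Note that `voice_sounds_familiar` is deliberately ignored by `next_step`: a familiar voice never substitutes for a verified factor.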

Regulations: The Stuff You Can’t Afford To Ignore

This section is where teams either become deployable… or become a headline.

HIPAA: treat voice + transcripts like sensitive data

HIPAA sets national standards for protecting PHI and applies to covered entities and business associates.

If your system records calls or stores transcripts tied to a patient, you’re likely dealing with PHI handling obligations.

Business Associate Agreements (BAAs)

If a vendor creates, receives, maintains, or transmits PHI on behalf of a covered entity, HIPAA generally requires business associate contracts to define and enforce safeguards.

Security Rule (and the reality about encryption)

The Security Rule sets administrative, physical, and technical safeguards for ePHI.

Encryption is “addressable” (not automatically mandatory): you assess risk and implement it if reasonable and appropriate, and if you don’t, you document why and what you use instead.

In practice, almost everyone encrypts anyway because it’s the baseline expectation for vendor trust.

FDA considerations when your voice agent crosses into clinical decision support

If your voice workflow begins to recommend decisions (not just collect info and route), you should understand where it may fall under Clinical Decision Support concepts and whether any device-like oversight applies. FDA updated its guidance in early 2026 to clarify CDS scope.

FDA has discussed total product lifecycle thinking for GenAI-enabled products, emphasizing ongoing management because outputs can vary and models can change.

EU AI Act timelines (if you serve EU patients)

If you operate in Europe or serve EU residents, the EU’s AI framework sets obligations and timelines with high-risk requirements staged to come into effect from 2026 onward. Keep an eye on shifting policy discussions too, because regulators and industry have debated timing and enforcement sequencing.

Regulation of AI voice agents in healthcare isn’t a single checklist; it’s a mix of privacy, security, vendor contracting, auditability, and careful scoping so your system doesn’t drift into medical decision territory unintentionally.

“2025 vs 2026”: What Changed in Deployment Expectations

A lot of teams ran pilots last year.

In 2026, the bar is higher.

Here’s the shift:

- 2025: “Does it sound human and answer calls?”
- 2026: “Can we prove what it did, why it did it, and that it stayed inside the scope?”

For AI voice agents in healthcare, 2025 was the year of pilots, while 2026 is the year of operational discipline: governance, integration depth, and measurable outcomes.

Also, market research firms now explicitly project 2025 and 2026 as ramp years for this category.
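The 2026 question above (“can we prove what it did, why it did it, and that it stayed inside the scope?”) comes down to what you log. One way to make a log harder to alter quietly is to chain entries by hash. This is a minimal sketch using only the Python standard library; the field names and the functions `append_audit_event` and `verify_chain` are hypothetical, and a production system would also need access controls and durable storage.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(event: dict) -> str:
    """Hash an event's fields in a stable key order."""
    payload = json.dumps(event, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_audit_event(log: list, actor: str, action: str, reason: str,
                       in_scope: bool) -> dict:
    """Append a what/why/scope record linked to the previous entry's hash."""
    event = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "actor": actor,        # e.g. "voice_agent" or a staff ID
        "action": action,      # what it did
        "reason": reason,      # why it did it (keep PHI out of this field)
        "in_scope": in_scope,  # whether the action was inside the approved scope
        "prev_hash": log[-1]["hash"] if log else "0" * 64,
    }
    event["hash"] = _digest(event)
    log.append(event)
    return event


def verify_chain(log: list) -> bool:
    """Return True only if no entry was edited, removed, or reordered."""
    prev_hash = "0" * 64
    for event in log:
        body = {key: value for key, value in event.items() if key != "hash"}
        if event["prev_hash"] != prev_hash or event["hash"] != _digest(body):
            return False
        prev_hash = event["hash"]
    return True
```

Editing any earlier entry changes its hash and breaks every link after it, which is what lets a reviewer trust the sequence.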

What AI Services Approach: “Voice First, Workflow Second, Autonomy Last”

- Voice is the interface: The real product is the workflow

  Our healthcare voice deployments focus on reducing admin load through:

  - 24/7 availability
  - Handling multiple calls simultaneously
  - Capturing and qualifying inquiries
  - Integration with scheduling/CRM/EHR-adjacent tools where applicable

- We start with safe, high-volume outcomes

  Scheduling, confirmations, admin intake, routing, and after-hours protocols, not open-ended medical advice.

- We design guardrails like we care about consequences

  Clear escalation rules, transcript handoff, consent-aware flows, and structured data capture so your staff isn’t cleaning up after the AI.

This is where our voice-calling AI agent for healthcare becomes a practical tool: not for “replacing staff,” but for catching calls, reducing friction, and keeping patients moving through your intake and scheduling flow.

Implementation: How to Launch Without Chaos

Step 1: Pick one workflow with a clean “success definition”

Example: “book appointment,” “reschedule,” “route billing inquiry.”

Step 2: Map your call intents

Don’t guess. Pull call logs, tag top reasons for calling, and design flows around the top 10.
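Once calls are tagged, ranking the reasons is a few lines of code. The sketch below assumes each call log row is a dict with an `intent` key that your team has already filled in; that record shape and the function name `top_intents` are assumptions for illustration.

```python
from collections import Counter


def top_intents(tagged_calls: list, n: int = 10) -> list:
    """Return (intent, count, share_of_calls) for the n most common intents."""
    counts = Counter(call["intent"] for call in tagged_calls)
    total = sum(counts.values())
    return [
        (intent, count, round(count / total, 3))
        for intent, count in counts.most_common(n)
    ]
```

The share column is useful for deciding where to stop: if the top few intents already cover most of the volume, the long tail can stay with humans for now.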

Step 3: Decide what data you will collect (and what you won’t)

Minimize collection. Define retention. Define who can access recordings/transcripts.
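These three decisions are easier to enforce when they live in one explicit policy object rather than in people’s heads. The following is a sketch with made-up values: the field list, the 90-day retention window, and the role names are placeholders (your legal and compliance teams set the real ones), and `DataPolicy`, `minimize`, `is_expired`, and `can_access` are hypothetical names.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class DataPolicy:
    collected_fields: frozenset  # the only fields the agent may store
    retention: timedelta         # how long recordings/transcripts are kept
    allowed_roles: frozenset     # who may open recordings/transcripts


# Placeholder values for illustration only.
POLICY = DataPolicy(
    collected_fields=frozenset({"name", "date_of_birth", "callback_number", "reason_for_call"}),
    retention=timedelta(days=90),
    allowed_roles=frozenset({"privacy_officer", "scheduling_supervisor"}),
)


def minimize(captured: dict, policy: DataPolicy) -> dict:
    """Drop anything the policy does not explicitly allow before storage."""
    return {key: value for key, value in captured.items() if key in policy.collected_fields}


def is_expired(recorded_at: datetime, now: datetime, policy: DataPolicy) -> bool:
    """True when a record has outlived the retention window and should be purged."""
    return now - recorded_at > policy.retention


def can_access(role: str, policy: DataPolicy) -> bool:
    """True only for roles the policy names explicitly."""
    return role in policy.allowed_roles
```

The allow-list direction matters: a new field the agent starts capturing is dropped by default until someone adds it to the policy on purpose.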

Step 4: Build the handoff experience

If the AI escalates, the human should receive:

- Reason for call
- Key captured details
- Any scheduling attempts or outcomes
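The three items above map naturally onto a small structured payload that travels with the transferred call. This is one possible shape, not a vendor format: `HandoffContext` and its field names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class HandoffContext:
    reason_for_call: str
    captured_details: dict  # key details gathered so far
    scheduling_attempts: list = field(default_factory=list)  # attempts and outcomes

    def summary(self) -> str:
        """Render a short screen-pop for the staff member taking the call."""
        details = "; ".join(
            f"{key}={value}" for key, value in self.captured_details.items()
        )
        attempts = "; ".join(self.scheduling_attempts) or "none attempted"
        return (
            f"Reason: {self.reason_for_call}\n"
            f"Details: {details}\n"
            f"Scheduling: {attempts}"
        )
```

If the staff member can read this summary in a few seconds, the patient does not have to start over.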

Step 5: Pilot with tight monitoring

Track:

- Containment rate (calls resolved without human)
- Time-to-resolution
- No-show rate impact
- Patient satisfaction signals
- Escalation accuracy
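Several of these metrics fall straight out of per-call records. The sketch below covers three of them and makes one assumption worth stating: “escalation accuracy” is defined here as the share of escalated calls that staff later judged to have needed a human, which is only one reasonable definition. `CallRecord` and `pilot_metrics` are hypothetical names.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class CallRecord:
    resolved_by_ai: bool         # resolved with no human involved
    escalated: bool              # handed to a human
    escalation_was_needed: bool  # staff judgment during review (assumption)
    seconds_to_resolution: float


def pilot_metrics(calls: list) -> dict:
    """Compute containment rate, escalation accuracy, and average time-to-resolution."""
    if not calls:
        raise ValueError("no calls to measure")
    contained = sum(1 for call in calls if call.resolved_by_ai and not call.escalated)
    escalated = [call for call in calls if call.escalated]
    accuracy: Optional[float] = None  # undefined when nothing was escalated
    if escalated:
        needed = sum(1 for call in escalated if call.escalation_was_needed)
        accuracy = needed / len(escalated)
    return {
        "containment_rate": contained / len(calls),
        "escalation_accuracy": accuracy,
        "avg_seconds_to_resolution": mean(call.seconds_to_resolution for call in calls),
    }
```

No-show impact and patient satisfaction need data from outside the call itself (the schedule and surveys), so they are left out of this sketch.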

Step 6: Expand slowly

Add one workflow at a time. This is how you avoid “agent sprawl.”