AI Assistants vs. AI Agents: Understanding the Key Differences

In 2023–2024, everything was a “chatbot.”

By 2025, everything became an “AI assistant.”

In 2026, every second pitch deck now screams “agents” and “agentic AI.”

On the surface, it all looks the same: LLM in the middle, some tools around it, a chat-style interface on top. But if you’re making decisions about where to invest, what to ship, and how to keep your environment safe, “AI agent vs AI assistant” is not a semantic question. It’s architectural, operational, and commercial.

Let’s see what the industry “bigwigs” are saying about it:

- IBM frames AI assistants as reactive systems that respond to user requests, while AI agents are proactive, goal-driven systems that can act autonomously to achieve outcomes.
- Other platforms describe agents as having both the ability and the authorization to execute actions in tools and systems, whereas assistants mainly advise humans, who still press the buttons.

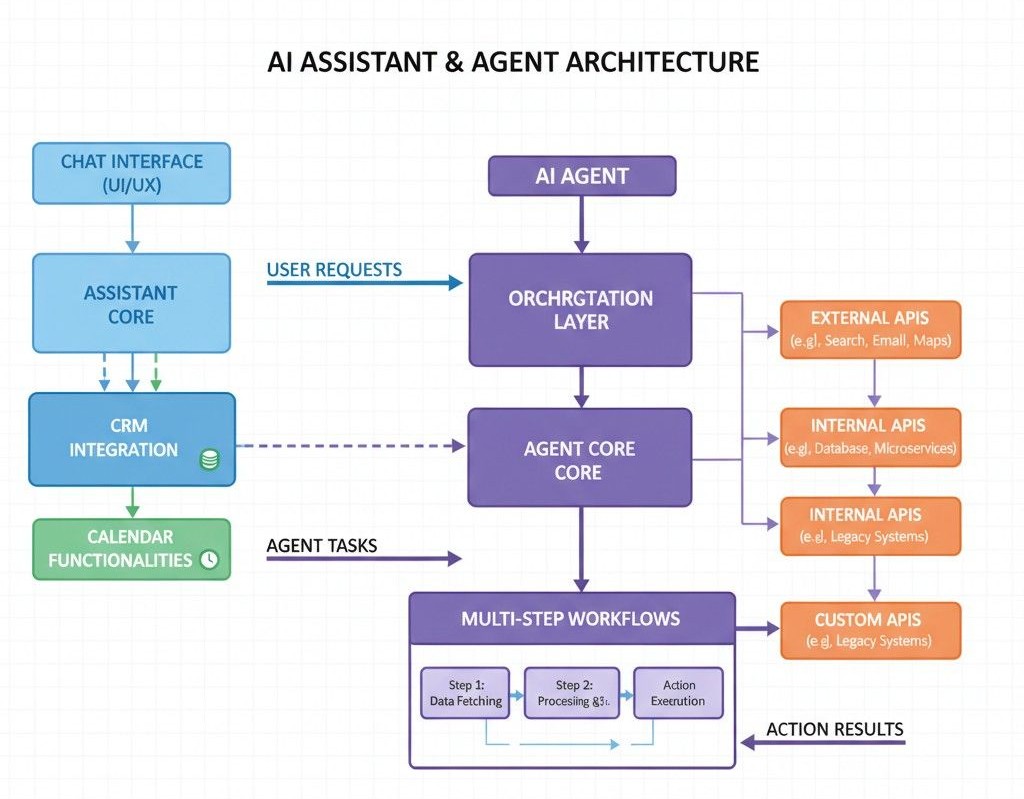

At the same time, “agentic AI” has become shorthand for multi-step, autonomous workflows where many agents coordinate through an orchestration layer, hitting APIs, CRMs, ticketing tools, and internal systems without humans in the loop for every step.

This isn’t just a tech trend. Agentic systems introduce new governance, security, and reliability questions: their ability to plan and act makes them both more powerful and riskier. Analysts are already warning that opaque decision-making, non-deterministic behaviour, and blurred data-access boundaries are slowing enterprise adoption of agentic AI.

This article is for people building or buying AI inside real businesses. We’re not going to rehash basic definitions. Instead, we’ll look at:

- How assistants and agents differ in practice
- What “agentic AI vs AI assistant” means in 2026
- How those differences show up in architecture, governance, metrics, and buying decisions
- How What AI Services thinks about assistants and agents when we design voice and virtual AI for clients

Assistants vs Agents: The Real Difference Under the Hood

From a user’s perspective, assistants and agents can both sit in chat, voice, or UI. The difference isn’t the interface; it’s the relationship between who decides, who acts, and who is on the hook when things go wrong.

AI Assistants: Reactive, Embedded, Human-Led

Modern AI assistants (like the ones we build at What AI Services for voice and HR) are usually:

- Reactive, not self-initiating: they wait for the user, an event, or a clear trigger. They don’t wake up and decide to “go optimize your pipeline.”
- Scoped to a clear role or surface: answering calls, triaging HR questions, summarising emails, prepping meeting notes, helping with donor outreach, and so on.
- Human-centred in their control loop: even when they automate pieces (e.g., booking meetings or sending follow-ups), there’s an explicit design around what they can and cannot do without confirmation.

Architecturally, an assistant is often “just” an LLM wrapped with:

- A few well-defined tools (calendar, CRM, ticketing)
- Guardrails for language, tone, and compliance
- Logging and observability at the interaction level

Think of assistants as AI-native teammates who help, but don’t own strategy or broad goals.
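A minimal sketch of that wrapper, under stated assumptions: the tool names (`crm_lookup`, `book_meeting`) and the `Tool` and `Assistant` classes are hypothetical, and the LLM call that would choose the tool is left out entirely. It only illustrates the human-led control loop: tools flagged as needing confirmation do not run until a person approves, and every interaction is logged.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Tool:
    name: str
    run: Callable[[dict], str]
    needs_confirmation: bool  # True for anything that changes state


@dataclass
class Assistant:
    tools: dict[str, Tool]
    log: list[dict] = field(default_factory=list)  # interaction-level log

    def handle(self, tool_name: str, args: dict, confirmed: bool = False) -> str:
        tool = self.tools.get(tool_name)
        if tool is None:
            outcome = "refused: unknown tool"
        elif tool.needs_confirmation and not confirmed:
            outcome = "pending: waiting for human confirmation"
        else:
            outcome = tool.run(args)
        # Log every request, including refused and pending ones.
        self.log.append({"tool": tool_name, "args": args, "outcome": outcome})
        return outcome


# Hypothetical tools, for illustration only.
assistant = Assistant(tools={
    "crm_lookup": Tool("crm_lookup", lambda a: f"record for {a['customer']}", False),
    "book_meeting": Tool("book_meeting", lambda a: f"booked {a['slot']}", True),
})
```

Calling `assistant.handle("book_meeting", {"slot": "10:00"})` returns the pending message; the same call with `confirmed=True` runs the tool. The read-only lookup runs without confirmation.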

AI Agents: Goal-Driven, Tool-Using, System-Led

AI agents, in the current industry usage, are systems that:

- Take a goal and pursue it across steps, without continuous human prompting
- Use tools and APIs to act: update CRM records, move money between accounts in a sandbox, create or resolve tickets, configure systems
- Adapt plans based on feedback from the environment (errors, new data, changing state)

Where a good assistant might say,

“Here are three ways to fix this customer issue.”

…an agent is allowed to say,

“I’ve already implemented option two: the invoice is corrected, the ticket is updated, and the customer has been notified.”

That autonomy is where the “AI agent vs assistant” line really sits. Agents are not just advising humans; they’re acting on behalf of your organisation.
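To make the goal-pursuing loop concrete, here is a rough sketch. Everything in it is an assumption for illustration: in a real agent an LLM would produce and revise the plan, whereas here the plan is a fixed list and "adapting" means swapping in a predefined fallback step when a tool raises an error. The tool names mirror the invoice example above but are hypothetical.

```python
from typing import Callable

ToolFn = Callable[[dict], dict]


def run_agent(plan: list[str], tools: dict[str, ToolFn],
              fallbacks: dict[str, str], state: dict,
              max_steps: int = 10) -> list[str]:
    """Execute plan steps; on a tool error, try a fallback step if one exists."""
    history: list[str] = []
    queue = list(plan)
    while queue and len(history) < max_steps:
        step = queue.pop(0)
        try:
            state.update(tools[step](state))
            history.append(f"ok:{step}")
        except Exception as exc:
            history.append(f"error:{step}:{exc}")
            if step in fallbacks:
                queue.insert(0, fallbacks[step])  # adapt the plan
            else:
                break  # no safe way forward: stop and hand back to a human
    return history


# Hypothetical tools operating on a shared state dict.
def correct_invoice(state: dict) -> dict:
    if state.get("invoice_locked"):
        raise RuntimeError("invoice locked")
    return {"invoice": "corrected"}


def unlock_then_correct(state: dict) -> dict:
    return {"invoice_locked": False, "invoice": "corrected"}


def update_ticket(state: dict) -> dict:
    return {"ticket": "updated"}


def notify_customer(state: dict) -> dict:
    return {"customer": "notified"}


tools = {f.__name__: f for f in
         (correct_invoice, unlock_then_correct, update_ticket, notify_customer)}
state = {"invoice_locked": True}
history = run_agent(
    plan=["correct_invoice", "update_ticket", "notify_customer"],
    tools=tools,
    fallbacks={"correct_invoice": "unlock_then_correct"},
    state=state,
)
```

The `max_steps` cap and the "stop when there is no fallback" branch are the kind of bounds that keep a loop like this from running unattended indefinitely.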

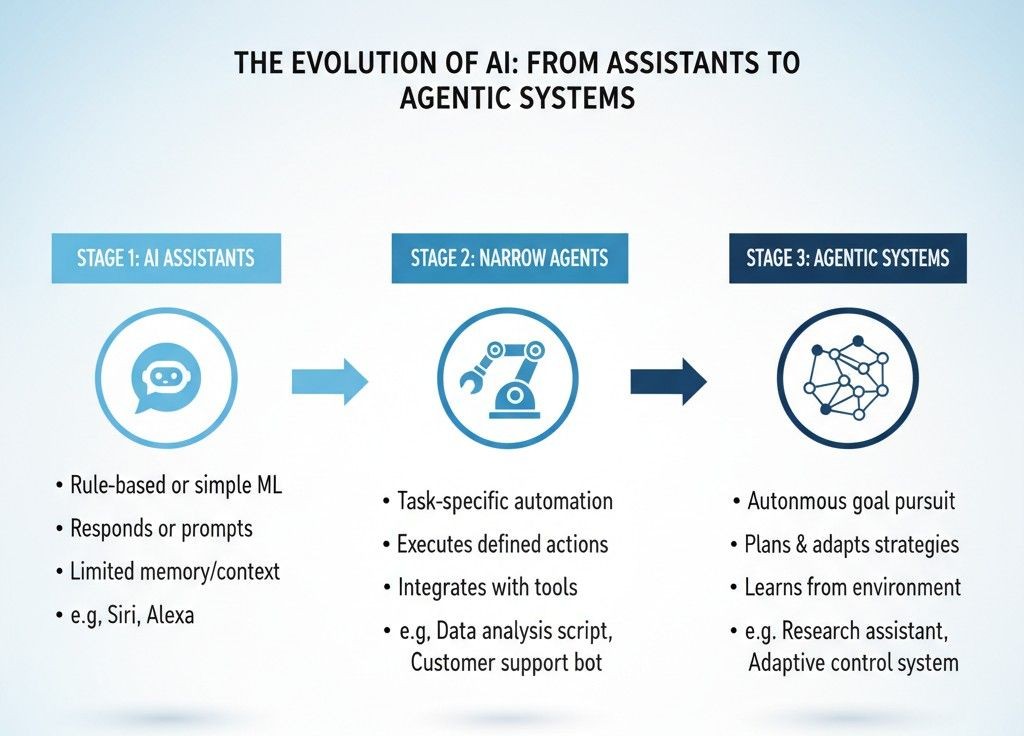

Where “Agentic AI” Fits In

You’ll see “agentic AI” thrown around a lot, sometimes as if it’s just a cooler synonym for agents. It’s not.

Systems of Agents, Not Just One Smart Bot

Agentic AI, in serious 2026 usage, usually means:

- A system of many AI agents, each handling a subtask
- An orchestration layer that plans, sequences and coordinates these agents
- The ability to set or interpret goals, then execute multi-step workflows that cross tools, teams, and data sources

For example: “Investigate this customer’s churn risk and propose a save action” might involve:

- One agent pulling product usage
- Another pulling billing data
- Another analysing support tickets
- Another drafting a personalised outreach plan
- The orchestration layer deciding the sequence and deciding when to stop

That’s agentic AI.
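The churn example can be sketched in a few lines. This is a toy under loud assumptions: each "agent" is a plain function returning made-up values (a real one would wrap an LLM plus tools), the risk rule in `outreach_agent` is invented, and the orchestrator simply runs agents in a fixed order and stops once a plan exists.

```python
from typing import Callable

Agent = Callable[[dict], dict]


# Stand-in agents returning hard-coded, illustrative data.
def usage_agent(ctx: dict) -> dict:
    return {"logins_last_30d": 2}


def billing_agent(ctx: dict) -> dict:
    return {"overdue_invoices": 1}


def support_agent(ctx: dict) -> dict:
    return {"open_tickets": 3}


def outreach_agent(ctx: dict) -> dict:
    # Invented rule: low usage or many open tickets counts as churn risk.
    risky = ctx["logins_last_30d"] < 5 or ctx["open_tickets"] > 2
    return {"plan": "schedule success call" if risky else "no action needed"}


def orchestrate(customer_id: str, pipeline: list[Agent]) -> dict:
    """Run agents in sequence over a shared context; stop once a plan exists."""
    ctx: dict = {"customer_id": customer_id}
    for agent in pipeline:
        ctx.update(agent(ctx))
        if "plan" in ctx:  # the orchestration layer decides when to stop
            break
    return ctx


result = orchestrate(
    "cust-42",
    [usage_agent, billing_agent, support_agent, outreach_agent],
)
```

The point of the sketch is the division of labour: agents each contribute one piece of context, while sequencing and the stopping rule live in the orchestrator, not in any single agent.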

By contrast, even a sophisticated assistant embedded in your inbox is still just that: an assistant. It doesn’t coordinate a multi-agent team behind the scenes unless you explicitly architect it that way.

Why This Matters for Strategy

The agent-vs-assistant decision isn’t “Do we want something smarter?” It’s:

- Do we want AI that mostly helps humans think and act faster?
- Or do we want AI that directly operates systems and processes with limited oversight?

In other words:

Assistants = leverage for human operators.

Agentic systems = operational infrastructure.

Practical Differences You’ll Experience Inside the Business

Let’s move from theory to how this plays out when you’re building, buying, and governing.

Architecture & Data Access

Assistants:

- Often live “close to the interface”: phone calls, chat widgets, inboxes, portals
- Use a small number of well-scoped tools (calendar, CRM lookup, ticket creation)
- Have narrower data access that is easier to reason about

Agents:

- Sit closer to your systems of record and orchestration layer
- May chain multiple tools: CRM > billing > inventory > email > internal APIs
- Need much more careful separation between data they can see and actions they can take

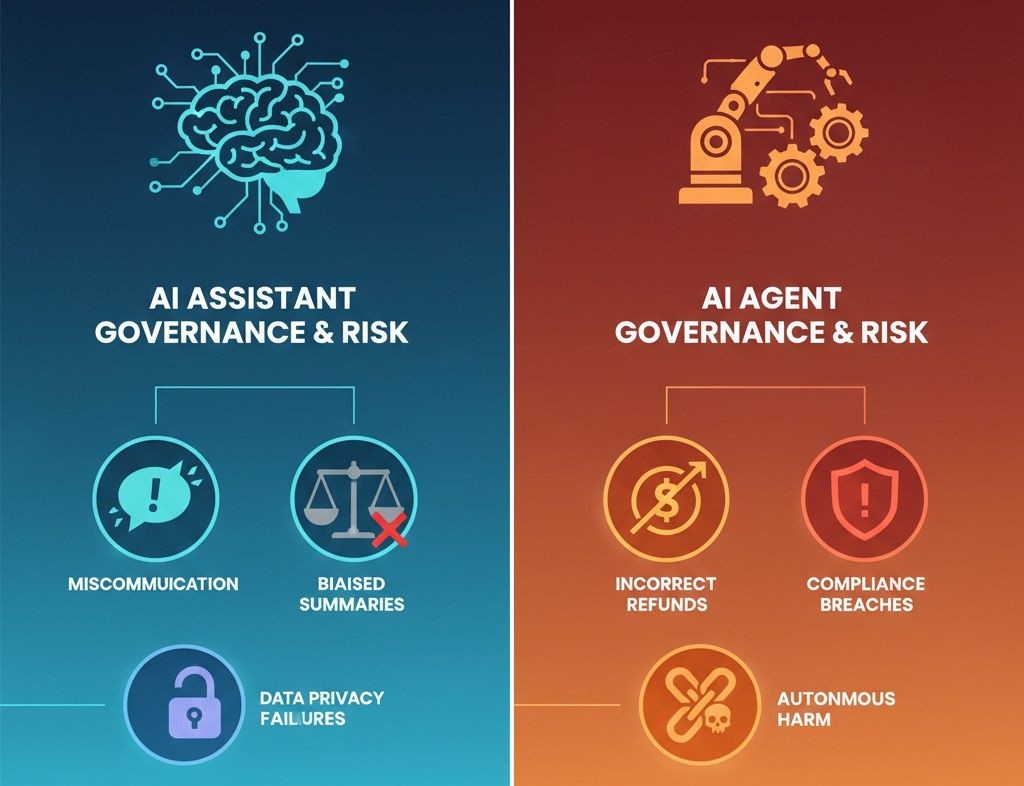

Governance & Risk

With an assistant, risk is often about miscommunication:

- Did it phrase something poorly?
- Did it summarise something in a biased or incomplete way?

With agents, risk is about consequence:

- Did it refund the wrong amount?
- Did it change access permissions incorrectly?
- Did it trigger an action that has compliance or legal implications?

That’s why enterprises are suddenly deeply focused on:

- Fine-grained permissions and roles for agents
- Audit trails that show what the agent did and why
- Strong boundaries between reading data and taking action

If you’re evaluating AI assistants vs agents for a new initiative, you should be evaluating governance tooling at the same time, not just model quality.
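As a rough illustration of what that governance tooling has to do, the sketch below keeps read and act permissions as separate sets and records every authorization check, allowed or not, with a reason. The class names (`AgentPolicy`, `Governor`) and resource names are hypothetical; real deployments would typically lean on an existing IAM or policy engine instead of hand-rolled checks.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentPolicy:
    can_read: set[str]  # resources the agent may look at
    can_act: set[str]   # resources the agent may change


@dataclass
class Governor:
    policy: AgentPolicy
    audit: list[dict] = field(default_factory=list)

    def authorize(self, kind: str, resource: str, reason: str) -> bool:
        scopes = {"read": self.policy.can_read, "act": self.policy.can_act}
        # Unknown request kinds fall through to an empty set, i.e. denied.
        allowed = resource in scopes.get(kind, set())
        self.audit.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "kind": kind,
            "resource": resource,
            "reason": reason,   # the "why" part of the audit trail
            "allowed": allowed,
        })
        return allowed


# Hypothetical policy: the agent can see billing data but only change the CRM.
gov = Governor(AgentPolicy(can_read={"billing", "crm"}, can_act={"crm"}))
can_check_invoice = gov.authorize("read", "billing", "verify invoice total")
can_issue_refund = gov.authorize("act", "billing", "refund duplicate charge")
```

Here the agent may inspect the invoice but is refused the refund, and both decisions land in `gov.audit`.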

Metrics & Ownership

Assistants are usually owned by line-of-business teams: Support, Sales, HR, Operations. Success looks like:

- Reduced handling time
- Better CSAT or NPS
- Higher productivity for human agents and employees

Agents and agentic systems, in contrast, increasingly sit closer to:

- Platform / architecture teams
- Security / risk functions
- Central AI or automation teams

Success looks more like:

- End-to-end cycle time for a workflow
- Percentage of fully-automated vs human-in-the-loop jobs
- Incident rates & rollback frequency

Different metrics = different leadership conversations.
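The agentic metrics above are straightforward to compute once each workflow run is recorded. The sketch below assumes a hypothetical `JobRecord` shape with four fields; the sample numbers are invented purely to show the arithmetic.

```python
from dataclasses import dataclass


@dataclass
class JobRecord:
    cycle_seconds: float   # end-to-end time for one workflow run
    human_touched: bool    # True if a person had to step in
    incident: bool         # True if the run caused an incident
    rolled_back: bool      # True if the run's changes were reverted


def workflow_metrics(jobs: list[JobRecord]) -> dict[str, float]:
    n = len(jobs)
    if n == 0:
        raise ValueError("no jobs to summarise")
    return {
        "avg_cycle_seconds": sum(j.cycle_seconds for j in jobs) / n,
        "fully_automated_pct": 100 * sum(not j.human_touched for j in jobs) / n,
        "incident_rate_pct": 100 * sum(j.incident for j in jobs) / n,
        "rollback_rate_pct": 100 * sum(j.rolled_back for j in jobs) / n,
    }


# Invented sample data: four runs of one workflow.
jobs = [
    JobRecord(60, False, False, False),
    JobRecord(120, False, False, False),
    JobRecord(90, True, False, False),
    JobRecord(130, False, True, True),
]
metrics = workflow_metrics(jobs)
```

With these four runs, three of them complete without human involvement and one triggers both an incident and a rollback.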

How to Decide: Assistant First, Agent Later (Most of the Time)

The temptation in 2026 is to jump straight into agents because that’s where the hype is. In practice, for most organisations, the smart path is assistant > narrow agent > agentic system, not the other way around.

When an Assistant Is Exactly What You Need

Choose an assistant when:

- The main problem is cognitive or communication load: too many calls, emails, requests, simple questions
- You want to standardise and speed up human work, not replace it
- You can get 80% of the value from better triage, drafting, answering, routing

This is where What AI Services is helping businesses:

- AI voice assistants that answer and route calls 24/7
- HR assistants that triage staff queries and surface policies
- Nonprofit assistants that help with donor engagement and admin

All of these can be powerful without giving an LLM the keys to your core financial or access-control systems.

When It’s Time to Think in Agents

You move toward agents when:

- You have repeatable, high-volume workflows that follow clear rules
- The cost or delay of keeping humans in the loop is meaningfully hurting outcomes
- You can clearly define what “good” looks like (success criteria, constraints, fallbacks)

Typical examples:

- Closing out low-risk support tickets end-to-end
- Handling simple billing adjustments under a threshold
- Approving standard vendor onboarding steps once checks pass

Here, your design conversation becomes:

“How much autonomy can we safely give this agent, on which subset of tasks, behind which guardrails?”

When Agentic Systems Make Sense

Full agentic AI, meaning multi-agent systems orchestrating across departments, makes sense when:

- You’re ready to invest in serious AI operations (observability, security, platform work)
- You have executive buy-in for AI as infrastructure, not just a feature
- You’re past the “one clever bot” phase and need coherent automation across teams

If you’re not there yet, that’s fine. You don’t get extra points for calling everything “agentic” before your foundation is ready.

Old Language, New Reality: Assistants, Agents, and “Virtual Agents”

One reason there’s confusion is that we’ve recycled terms from earlier waves.

- “Virtual agents” used to refer to scripted or rules-based chatbots sitting in help centres.
- “Assistants” were often seen as friendlier versions of the same idea.

Now you have LLM-native assistants that actually reason over unstructured data, and agents that plan, call tools, and act. Articles debating “AI assistant vs virtual agent” often come from older bot-era content and don’t map neatly to 2026 architectures.

The practical approach:

- Treat “assistant” as your user-facing layer (voice, chat, portal).
- Treat “agent” as the execution layer that operates workflows behind the scenes.

In other words: the assistant is who the user talks to; the agents are who the assistant delegates work to.

How What AI Services Thinks About Assistants and Agents

At What AI Services, we build AI voice and virtual assistants that sit at the front door of your operations: answering calls, triaging requests, and handling admin. Increasingly, we connect them to agentic workflows under the hood where it’s safe, useful, and measurable.

A few principles we keep coming back to when navigating AI assistants vs AI agents for clients:

Assistants Own the Relationship, Agents Own the Routine

- Assistants are designed to feel predictable and reliable to users, whether that’s a customer on the phone or an employee asking HR a question.
- Agents are designed to quietly execute routine tasks with clear success criteria: update records, move information, close loops.

We rarely expose raw agents directly to end users. We let assistants orchestrate them.

Autonomy Is Earned, Not Granted by Hype

We use a staged approach:

- Observe: let the assistant suggest actions, humans still click.
- Co-pilot: let the agent perform low-risk actions automatically.
- Operate: extend autonomy step-by-step once data proves reliability.

This keeps you out of the “one agent did something wild in production” headlines that are starting to appear as agentic AI spreads.
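One way to picture the staged approach is as a small decision function. This is a sketch, not a description of our production logic: the three risk tiers, and the rule that high-risk or unclassified actions always go back to a human, are assumptions added for the example.

```python
from enum import IntEnum


class Stage(IntEnum):
    OBSERVE = 1   # suggest only; humans still click
    CO_PILOT = 2  # low-risk actions run automatically
    OPERATE = 3   # autonomy extended to more action types


def decide(stage: Stage, risk: str) -> str:
    """Return 'execute' or 'suggest' for an action with the given risk tier."""
    if risk not in ("low", "medium"):
        return "suggest"  # assumption: high or unknown risk always needs a human
    if stage == Stage.OBSERVE:
        return "suggest"
    if stage == Stage.CO_PILOT:
        return "execute" if risk == "low" else "suggest"
    return "execute"  # OPERATE: low- and medium-risk actions


decisions = {
    (stage.name, risk): decide(stage, risk)
    for stage in Stage
    for risk in ("low", "medium", "high")
}
```

Moving a workflow from one stage to the next then becomes an explicit, reviewable configuration change instead of a side effect of a prompt tweak.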

Design for Human Trust and Operational Reality

Because our core products are AI voice assistants and domain-specific helpers (HR, nonprofits, and more), we constantly balance:

- Latency vs quality (especially on calls)
- Personalisation vs privacy
- Automation depth vs explainability for teams who have to live with these systems

If you’re trying to decide between an AI agent vs assistant for a new initiative, this is the lens we’d encourage you to use too: What does real life look like for the humans around this system?

So, What Should You Do Next?

If you’re at the stage of searching “agent vs assistant AI”, you’re probably somewhere between:

- “We have a few basic assistants running. What's next?”
- “Our team wants agents; our security lead is nervous; our CFO wants a plan.”

A more sensible path looks like this:

- Start with assistants where the stakes are communication, not irreversible actions.
- Identify 1–2 workflows where narrow agents could safely close the loop.
- Invest in observability, permissions, and clear rollback stories before you scale autonomy.
- Treat “agentic AI” as an architectural direction, not a marketing label.

And if you’d rather not debug this alone, What AI Services can help you:

- Audit your current AI landscape
- Decide where assistants are enough and where agents make sense
- Design voice and workflow AI that fits your risk, revenue, and operational reality